Airflow is a Python-based workflow tool published by Apache that lets you create, schedule, and monitor workflows programmatically. It is widely used in modern data engineering. This article shows you how to install Airflow on Windows 10 or 11 via WSL (Windows Subsystem for Linux).

Prerequisites

- WSL - Follow this article to enable it if you have not done that already.

- Python - from Airflow 2.0, Python 3.6+ is required. Follow Install Python 3.9.1 on WSL to install Python 3.9.1 on WSL.

Install Airflow on WSL

Now we can install Airflow in WSL.

Create AIRFLOW_HOME variable

Add the following line to the ~/.bashrc file to set up the variable:

export AIRFLOW_HOME=~/airflow

And then source it:

source ~/.bashrc
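This guide edits ~/.bashrc more than once, so it can help to append lines only when they are not already there. The following is a minimal sketch of that idea, not part of the original steps: the helper name append_once and the scratch file demo_rc are hypothetical, and the demonstration writes to a temporary file rather than to your real ~/.bashrc.

```shell
# Append a line to a file only if that exact line is not already present.
# append_once is a hypothetical helper name used for illustration.
append_once() {
    line="$1"
    file="$2"
    touch "$file"
    # -q: quiet, -x: match the whole line, -F: treat the pattern as a fixed string
    if ! grep -qxF -- "$line" "$file"; then
        printf '%s\n' "$line" >> "$file"
    fi
}

# Demonstration against a scratch file; pass ~/.bashrc instead for real use.
demo_rc="$(mktemp)"
append_once 'export AIRFLOW_HOME=~/airflow' "$demo_rc"
append_once 'export AIRFLOW_HOME=~/airflow' "$demo_rc"   # second call changes nothing
grep -c 'AIRFLOW_HOME' "$demo_rc"                        # prints 1
```

Running the helper repeatedly leaves a single export line in the file, so re-running the setup does not pile up duplicates.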

Install Airflow package

Run the following commands to install it:

AIRFLOW_VERSION=2.2.3
PYTHON_VERSION="$(python --version | cut -d " " -f 2 | cut -d "." -f 1-2)"
CONSTRAINT_URL="https://raw.githubusercontent.com/apache/airflow/constraints-${AIRFLOW_VERSION}/constraints-${PYTHON_VERSION}.txt"
pip3 install "apache-airflow==${AIRFLOW_VERSION}" --constraint "${CONSTRAINT_URL}"
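To see what the install commands compute, the sketch below runs the same cut pipeline on a fixed sample string instead of calling python. The variable sample_version_output is hypothetical, and its value assumes the Python 3.9.1 installation from the prerequisites; on your system the real output of python --version is used.

```shell
AIRFLOW_VERSION=2.2.3

# Stand-in for the output of `python --version` (assumed to be Python 3.9.1).
sample_version_output="Python 3.9.1"

# First cut: take the second space-separated field ("3.9.1").
# Second cut: keep only the first two dot-separated fields ("3.9").
PYTHON_VERSION="$(echo "$sample_version_output" | cut -d " " -f 2 | cut -d "." -f 1-2)"

CONSTRAINT_URL="https://raw.githubusercontent.com/apache/airflow/constraints-${AIRFLOW_VERSION}/constraints-${PYTHON_VERSION}.txt"

echo "$PYTHON_VERSION"    # prints 3.9
echo "$CONSTRAINT_URL"    # prints https://raw.githubusercontent.com/apache/airflow/constraints-2.2.3/constraints-3.9.txt
```

The constraints file pins the dependency versions tested with that Airflow release and Python minor version, which is why both values appear in the URL.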

If you see a warning message like the following:

WARNING: The script airflow is installed in '/home/***/.local/bin' which is not on PATH.

Add that directory to the PATH environment variable so that you can use commands like airflow. You can do this by adding the following line to ~/.bashrc:

export PATH=$PATH:~/.local/bin

Remember to source the new settings:

source ~/.bashrc
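If you are unsure whether the directory is already on PATH, a quick check such as the following can tell you. This is a small sketch; the function name on_path and the variable bin_dir are hypothetical names chosen for illustration.

```shell
# Return success (0) if directory $1 is one of the colon-separated entries of PATH.
# on_path is a hypothetical helper name used for illustration.
on_path() {
    # Wrapping both sides in colons lets one pattern match the first,
    # middle, and last entries alike.
    case ":${PATH}:" in
        *":$1:"*) return 0 ;;
        *) return 1 ;;
    esac
}

bin_dir="$HOME/.local/bin"
if on_path "$bin_dir"; then
    echo "$bin_dir is already on PATH"
else
    echo "$bin_dir is missing from PATH"
fi
```

The check compares literal strings, so an entry written as ~/.local/bin without expansion would not match the expanded $HOME form.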

Initialize database

Run the following command to initialize the database:

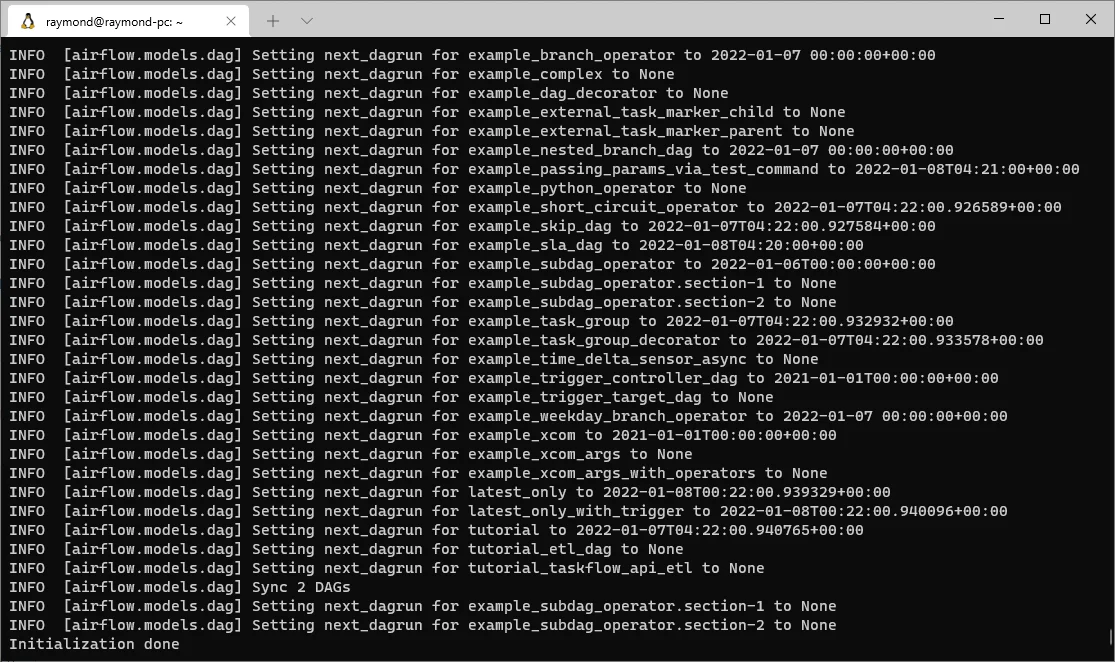

airflow db init

This command creates the AIRFLOW_HOME folder with a configuration file and the default SQLite database for storing metadata. See $AIRFLOW_HOME/airflow.cfg for all the configuration items.
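The configuration file uses an INI-style "key = value" layout, so individual settings can be looked up with standard text tools. The sketch below shows one way to do that. The function name cfg_value is hypothetical, and the demonstration reads a small stand-in file with made-up values rather than a real airflow.cfg.

```shell
# Print the value of the first "key = value" line for the given key.
# Section headers are ignored, so this only suits keys that appear once.
# cfg_value is a hypothetical helper name used for illustration.
cfg_value() {
    file="$1"
    key="$2"
    grep -E "^${key}[[:space:]]*=" "$file" | head -n 1 | cut -d "=" -f 2- | sed 's/^[[:space:]]*//'
}

# Stand-in configuration file; the values here are invented for the demo.
demo_cfg="$(mktemp)"
cat > "$demo_cfg" <<'EOF'
[core]
dags_folder = /home/demo/airflow/dags
load_examples = True
EOF

cfg_value "$demo_cfg" load_examples   # prints True
cfg_value "$demo_cfg" dags_folder     # prints /home/demo/airflow/dags
```

Against a real installation you would call it as cfg_value "$AIRFLOW_HOME/airflow.cfg" load_examples, for example to check whether the example DAGs are enabled.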

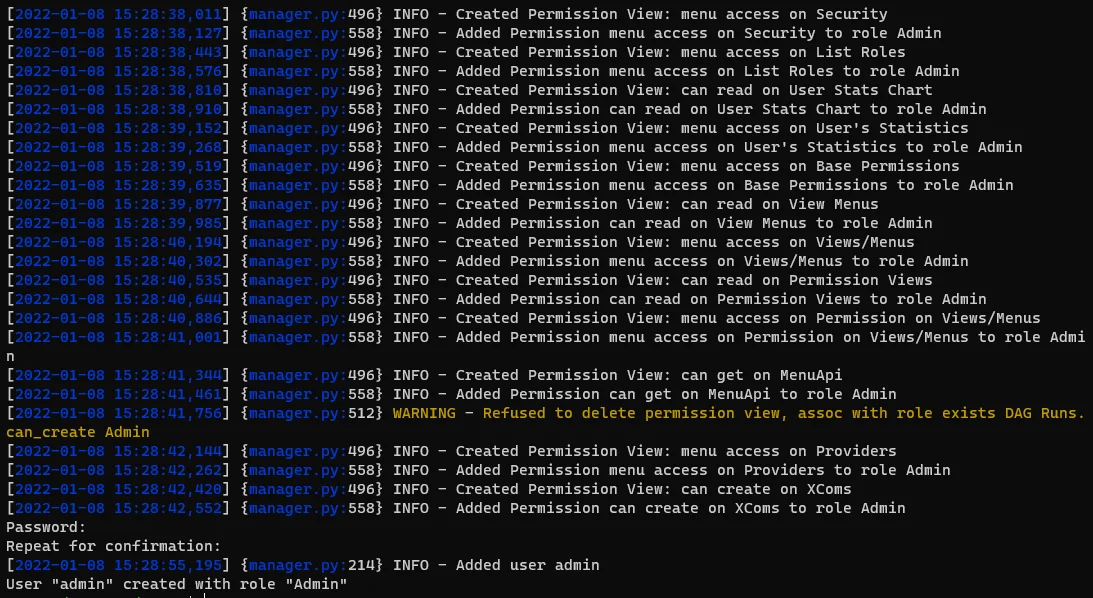

Create an admin user

Now let's create an admin account using the following command:

airflow users create \
    --username admin \
    --firstname Raymond \
    --lastname Tang \
    --role Admin \
    --email ***@app.kontext.tech

You will be prompted to enter a password for the user.

Start webserver service

Now you can run the following command to start the webserver service:

airflow webserver --port 8080

Remember to change the port number to a different one if it is already used by other services in your system.

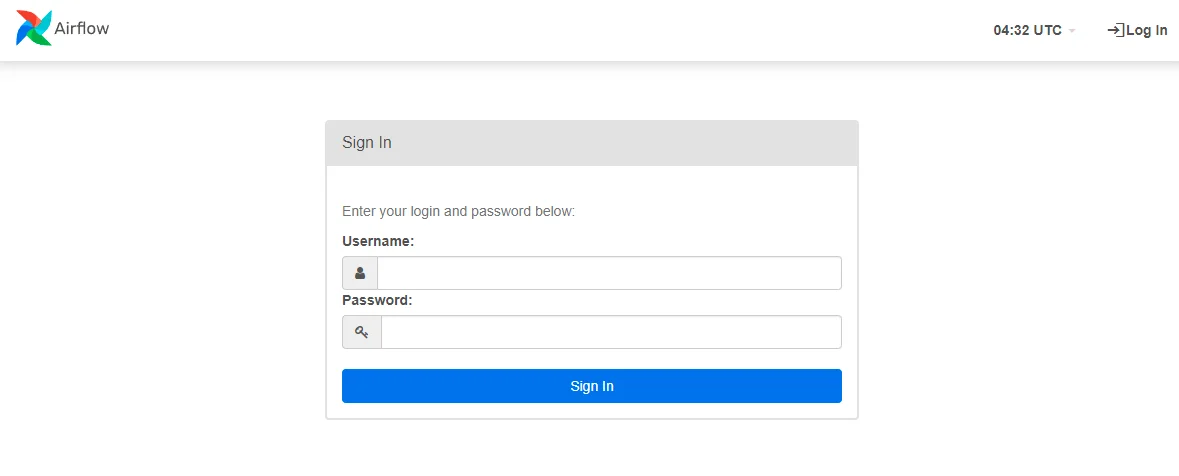

Open the website in browser: http://localhost:8080/

The login screen looks like the following screenshot:

Input these details to sign in:

- Username: admin

- Password: the password you input previously when setting up.

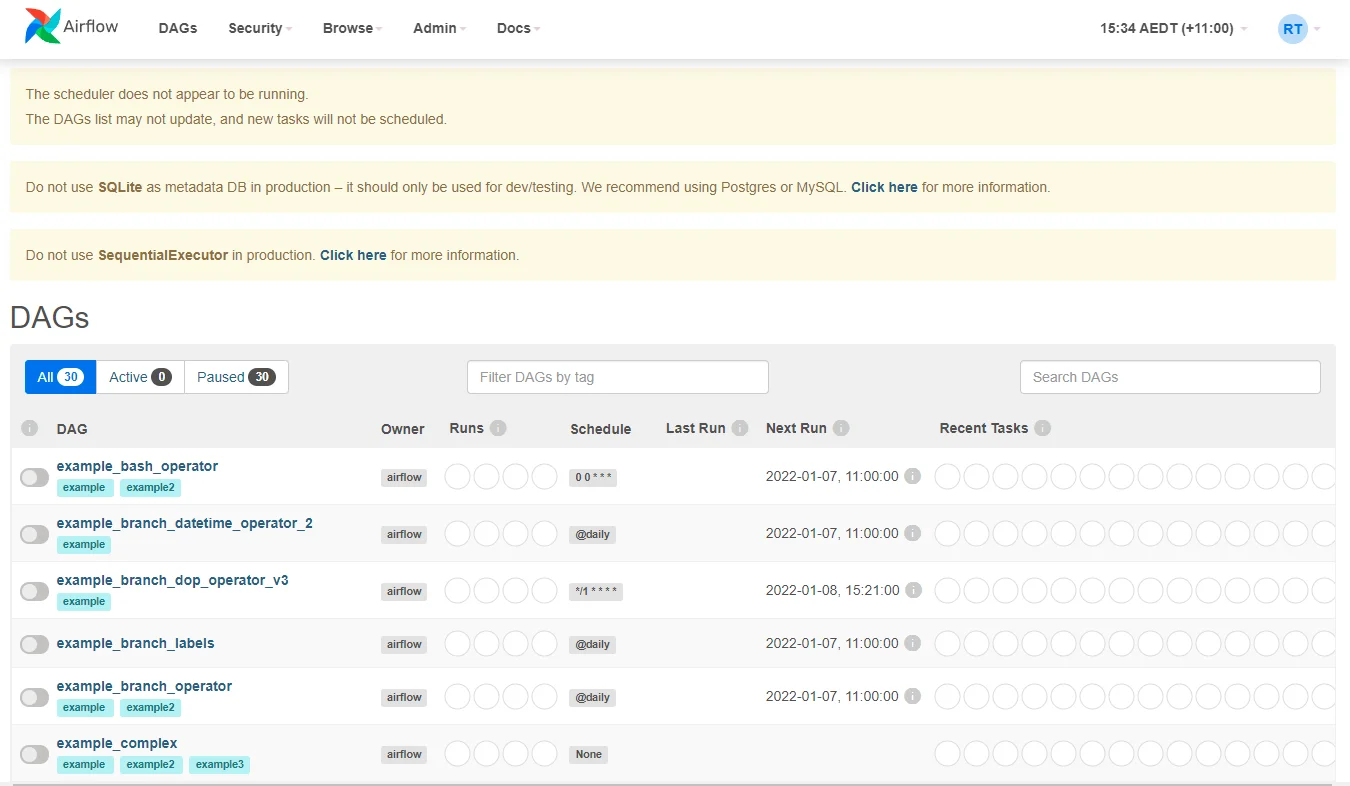

After login, the home page will show all the default example DAGs:

Start scheduler service

If you want to enable the scheduling service, run the following command in a different Bash/WSL window:

airflow scheduler
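If you would rather keep both services in one terminal, one option is to run them as background jobs and stop them together. The sketch below only illustrates that shell pattern: the sleep commands are stand-ins for the two Airflow commands, and the names web_pid, sched_pid, and stop_services are hypothetical.

```shell
# Start each long-running command in the background and remember its process ID.
sleep 30 &            # stand-in for: airflow webserver --port 8080
web_pid=$!
sleep 30 &            # stand-in for: airflow scheduler
sched_pid=$!

# Stop both background processes and wait until they have exited.
# stop_services is a hypothetical helper name used for illustration.
stop_services() {
    kill "$web_pid" "$sched_pid" 2>/dev/null || true
    wait "$web_pid" "$sched_pid" 2>/dev/null || true
}

# kill -0 sends no signal; it only tests whether the process exists.
kill -0 "$web_pid" && echo "first process is running"
stop_services
kill -0 "$web_pid" 2>/dev/null || echo "first process has stopped"
```

With the real commands in place of sleep, the log output of both services is interleaved in the same terminal, which is the main trade-off compared with using two windows.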

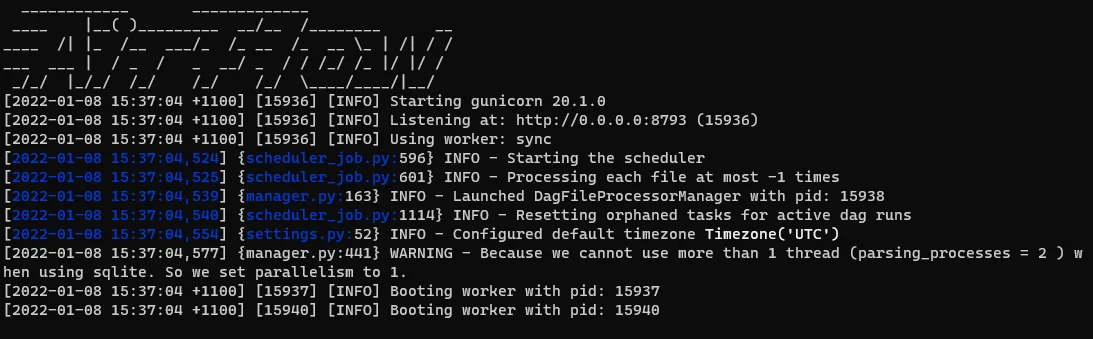

The command prints output like the following:

You can stop the service by pressing Ctrl + C.

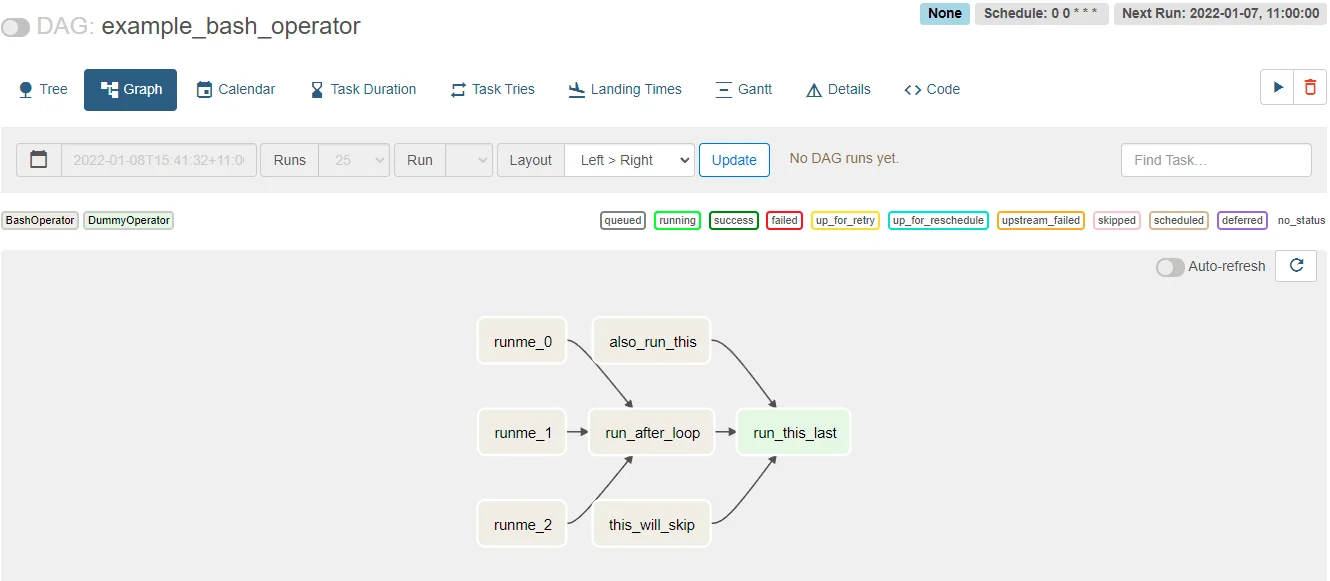

Run example workflow

We can run a task from a built-in example workflow using the airflow tasks run command. Running it without arguments prints its usage:

airflow tasks run
usage: airflow tasks run [-h] [--cfg-path CFG_PATH] [--error-file ERROR_FILE] [-f] [-A] [-i] [-I] [-N] [-l] [-m]
                         [-p PICKLE] [--pool POOL] [--ship-dag] [-S SUBDIR]
                         dag_id task_id execution_date_or_run_id

Run this command:

airflow tasks run example_bash_operator runme_0 2022-01-08

The command will trigger task runme_0 in DAG example_bash_operator:

You can also backfill jobs for historical dates:

airflow dags backfill --start-date "2022-01-01" --end-date 2022-01-05 example_bash_operator