Hive 3.x added a new authentication feature for HiveServer2 clients. When starting the HiveServer2 service (Hive version 3.0.0), you may encounter errors like:

HiveServer2 metastore.RetryingMetaStoreClient: RetryingMetaStoreClient trying reconnect as [username] (auth:SIMPLE).

Looking into the Hive log details, you may find the following recommendation:

metastore.HiveMetaStore: Not authorized to make the get_current_notificationEventId call. You can try to disable metastore.metastore.event.db.notification.api.auth

By default, this property is set as follows:

<property>
  <name>hive.metastore.event.db.notification.api.auth</name>
  <value>true</value>
  <description>
    Should metastore do authorization against database notification related APIs such as get_next_notification.
    If set to true, then only the superusers in proxy settings have the permission
  </description>
</property>

So to fix the issue, follow these two steps.

Step 1 - Set up proxy settings

In the Hadoop core-site.xml configuration file, add the following properties:

<property>
  <name>hadoop.proxyuser.$superuser.hosts</name>
  <value>*</value>
</property>
<property>
  <name>hadoop.proxyuser.$superuser.groups</name>
  <value>*</value>
</property>

Replace $superuser with your user account. I am running as user fahao, so I added the following configuration:

<property>
  <name>hadoop.proxyuser.fahao.hosts</name>
  <value>*</value>
</property>
<property>
  <name>hadoop.proxyuser.fahao.groups</name>
  <value>*</value>
</property>

Without the above configuration, my user account cannot impersonate the hive user, and connections fail with:

java.lang.RuntimeException: org.apache.hadoop.ipc.RemoteException(org.apache.hadoop.security.authorize.AuthorizationException): User: fahao is not allowed to impersonate hive (state=08S01,code=0)
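The two proxy-user properties in Step 1 differ only in the account name, so they can be generated instead of typed by hand. The following is a minimal shell sketch under that assumption: the function name `proxyuser_props` is hypothetical (it is not part of Hadoop), and the wildcard values simply mirror the ones used above. It only prints the XML; pasting the output into the `<configuration>` element of core-site.xml remains a manual step.

```shell
#!/bin/sh
# Print the two hadoop.proxyuser.* properties for a given account.
# proxyuser_props is a hypothetical helper name, not a Hadoop command.
proxyuser_props() {
    user="${1:-$(id -un)}"   # default to the current OS user if no argument
    for suffix in hosts groups; do
        printf '<property>\n'
        printf '  <name>hadoop.proxyuser.%s.%s</name>\n' "$user" "$suffix"
        printf '  <value>*</value>\n'   # wildcard, as in the example above
        printf '</property>\n'
    done
}

# Example: generate the snippet for the account used in this post.
proxyuser_props fahao
```

Calling `proxyuser_props` without an argument uses the account you are currently logged in as, which is usually the account that starts HiveServer2.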

Step 2 (optional) - Update Hive metadata configuration

Update your Hive configuration file hive-site.xml to include the following settings:

<property>
  <name>hive.metastore.event.db.notification.api.auth</name>
  <value>false</value>
  <description>
    Should metastore do authorization against database notification related APIs such as get_next_notification.
    If set to true, then only the superusers in proxy settings have the permission
  </description>
</property>

Without this change, you need to specify a username when connecting to Hive using beeline:

beeline> !connect jdbc:hive2://localhost:10000/default -n username

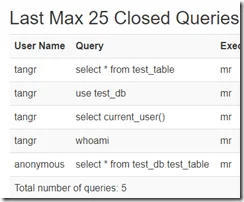

If anonymous connections are allowed, User Name shows as anonymous in the HiveServer2 web UI; otherwise the connected username is shown there.
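The same connection can also be made non-interactively from a shell, which is handy for quick checks. The sketch below assumes `beeline` is on your `PATH` and reuses the JDBC URL from the example above; `hs2_query` is a hypothetical wrapper name introduced only for illustration. It relies on beeline's `-u` (JDBC URL), `-n` (user name), and `-e` (statement to execute) options.

```shell
#!/bin/sh
# Connection details taken from the example above; adjust for your setup.
HS2_URL="jdbc:hive2://localhost:10000/default"

# hs2_query is a hypothetical wrapper: run one statement as the given user.
#   $1 = user name to connect as
#   $2 = statement to execute
hs2_query() {
    beeline -u "$HS2_URL" -n "$1" -e "$2"
}
```

For example, `hs2_query username 'show databases;'` connects as `username`, runs the statement, and exits.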

After these changes, restart the Hadoop and HiveServer2 services; the problem should be resolved.

$HIVE_HOME/bin/hive --service metastore &
$HIVE_HOME/bin/hive --service hiveserver2 &
# Or
$HIVE_HOME/bin/hiveserver2 &
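Because the services are started in the background, HiveServer2 may not be listening yet when the commands return. A rough way to wait for it is to poll the port until it accepts a TCP connection. The sketch below assumes bash (it uses the `/dev/tcp` feature) and the port 10000 seen in the JDBC URL; `wait_for_port` is a hypothetical helper, and an open port only suggests, rather than proves, that the service is fully ready.

```shell
#!/usr/bin/env bash
# wait_for_port is a hypothetical helper: poll host:port until it accepts
# a TCP connection or the timeout (in seconds, default 60) expires.
wait_for_port() {
    local host="$1" port="$2" timeout="${3:-60}" waited=0
    # /dev/tcp is a bash feature; the subshell closes the socket on exit.
    until (exec 3<>"/dev/tcp/${host}/${port}") 2>/dev/null; do
        if [ "$waited" -ge "$timeout" ]; then
            return 1   # gave up: nothing is listening yet
        fi
        sleep 1
        waited=$((waited + 1))
    done
    return 0           # the port accepted a connection
}
```

A possible use is `wait_for_port localhost 10000 120 && echo "HiveServer2 is accepting connections"`, run right after starting the services.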

Try connecting to HiveServer2 via the beeline CLI:

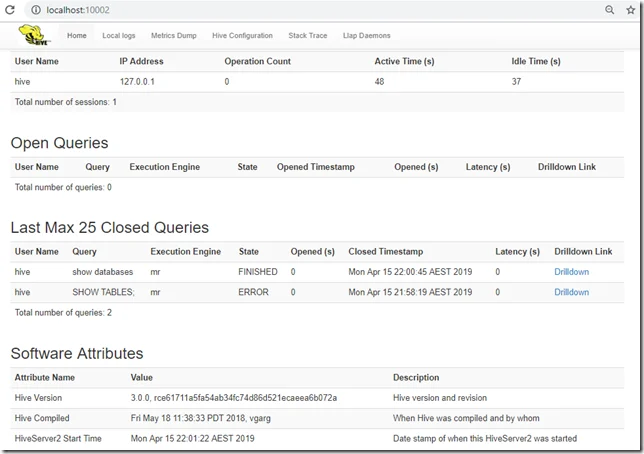

Once the service starts successfully, you can view the HiveServer2 Web UI via the following URL:

You can use beeline to query the Hive databases:

beeline> !connect jdbc:hive2://localhost:10000/default
Connecting to jdbc:hive2://localhost:10000/default
Enter username for jdbc:hive2://localhost:10000/default: hive
Enter password for jdbc:hive2://localhost:10000/default:
Connected to: Apache Hive (version 3.0.0)
Driver: Hive JDBC (version 3.0.0)
Transaction isolation: TRANSACTION_REPEATABLE_READ
0: jdbc:hive2://localhost:10000/default> show databases;
+----------------+
| database_name  |
+----------------+
| default        |
| test_db        |
+----------------+
2 rows selected (0.859 seconds)