This article provides detailed steps about how to compile and build Hadoop (incl. native libs) on Windows 10. The following guide is based on Hadoop release 3.2.1.

*The yellow elephant logo is a registered trademark of Apache Hadoop; the blue window logo is a registered trademark of Microsoft.

Prerequisites

In the Hadoop repository on GitHub, the BUILDING.txt file provides the high-level steps to build Hadoop in different environments. It also lists the prerequisites for each release, which may differ between releases. For example, Hadoop 3.2.2 requires ProtocolBuffer 3.0 while Hadoop 3.2.1 requires version 2.5.0.

This guide targets the Hadoop 3.2.1 build. The source code is based on branch **[rel/release-3.2.1](https://github.com/apache/hadoop/tree/rel/release-3.2.1)**. The building file is located here. To summarise, the following are the requirements for building on Windows 10 (I will demonstrate how to install each of them in the following sections):

| Requirement | Comments |
|---|---|
| Windows 10 | I'm using a Windows 10 x64 virtual machine for this guide. It's a brand-new machine with no programs installed. systeminfo reports OS Version: 10.0.10240 N/A Build 10240; System Type: x64-based PC. |
| Internet connection | To fetch Maven/Hadoop dependencies from Maven Central or other repos. Also required to download the dependent software and source code. |
| JDK 1.8 | Hadoop is built on top of Java. We need JDK 1.8 to build and run Hadoop. |
| Maven 3.0 or later | Required to build Hadoop. |
| ProtocolBuffer 2.5.0 | Some Java classes will be generated based on ProtocolBuffer definitions. |
| CMake 3.1 or newer | For compiling native C libs for HDFS, etc. |
| Python (optional) | For generation of docs using 'mvn site'. For this guide, we may ignore it. |
| zlib headers (optional) | If building native code bindings for zlib. For this guide, we may ignore it. |
| GnuWin32 or Git Bash | Unix command-line tools from GnuWin32 or Git Bash: sh, mkdir, rm, cp, tar, gzip. These tools must be present on your PATH. For simplicity, we will use Git Bash, since we also use it to check out the source code. |
| Windows SDK 8.1 | Required if building CPU rate control for the container executor. *After completing the whole build, I don't think this is mandatory, but I'll leave it here until I can confirm that. |
| Visual Studio 2010 Professional or Higher | Includes C++ compilers, etc. *A later version of Visual Studio should also work; this guide follows the official instructions to be safe. |

Now let's begin to install these dependencies one by one.

Warning: In the following steps, PowerShell commands are used to add environment variables. If you already have a PATH user environment variable set up, I'd suggest updating PATH manually via the GUI tool instead, since the SETX PATH commands below overwrite it with the full PATH of the current session.

Info: You can skip some of the following steps if you already have those tools set up.
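To make the PATH warning above concrete, here is a minimal Python sketch of a check you might run before using SETX PATH. It assumes the documented behaviour that setx truncates values longer than 1024 characters. The names build_user_path and fits_setx are hypothetical helpers for illustration, not part of any tool used in this guide.

```python
SETX_LIMIT = 1024  # assumption: setx truncates values longer than this


def build_user_path(new_entries, current_path):
    """Prepend new_entries to current_path, skipping entries already present.

    Comparison ignores case and a trailing backslash, which is how Windows
    treats directory names in PATH.
    """
    existing = [part for part in current_path.split(";") if part]
    seen = {part.lower().rstrip("\\") for part in existing}
    merged = []
    for entry in new_entries:
        key = entry.lower().rstrip("\\")
        if key not in seen:
            merged.append(entry)
            seen.add(key)
    return ";".join(merged + existing)


def fits_setx(value):
    """Return True if the value is short enough to survive setx untruncated."""
    return len(value) <= SETX_LIMIT
```

If fits_setx returns False for the combined value, editing PATH through the GUI tool is the safer route.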

Section I Installation Guide

Step by step installation guide.

Step 1 Install JDK 1.8

- Download JDK 1.8 from the following page:

- After download, please run the executable to install JDK.

In my system, JDK is installed at this location: C:\Program Files\Java\jdk1.8.0_241.

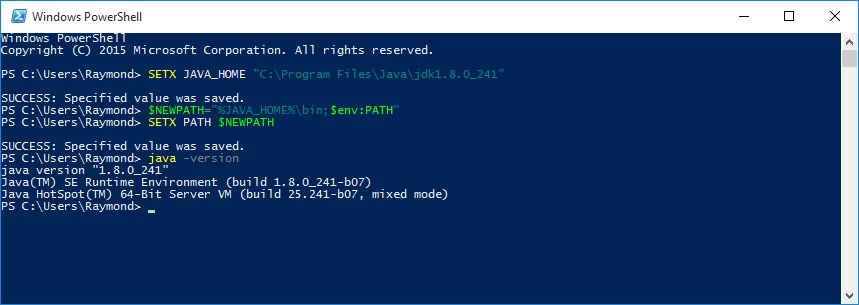

- Run the following commands in PowerShell to set up the JAVA_HOME environment variable (remember to replace the JDK path with your own):

SETX JAVA_HOME "C:\Program Files\Java\jdk1.8.0_241"

$NEWPATH="%JAVA_HOME%\bin;$env:PATH"

SETX PATH $NEWPATH

- And then you can verify by running the following command in Command Prompt or PowerShell:

java -version

Your output should look like the following:

Step 2 Install Git Bash

- Download Windows Git Bash from the following website:

I am using 2.25.0 64-bit version of Git for Windows.

After the download completes, click the Run button to install Git. I'm installing Git to the folder C:\Program Files\Git.

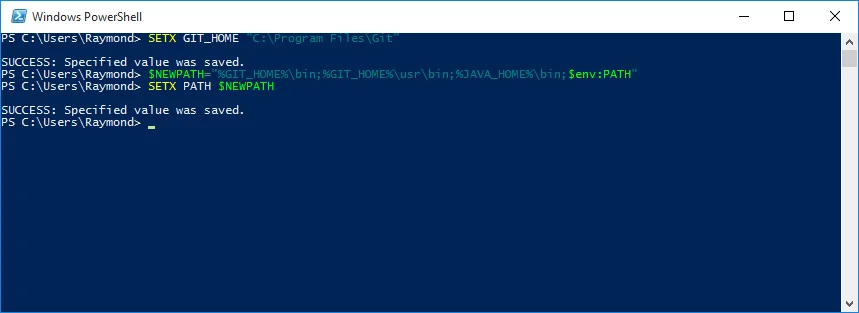

Run the following commands in PowerShell to setup environment variables (remember to change your Git installation location accordingly):

SETX GIT_HOME "C:\Program Files\Git"

$NEWPATH="%GIT_HOME%\bin;%GIT_HOME%\usr\bin;%JAVA_HOME%\bin;$env:PATH"

SETX PATH $NEWPATH

The output looks like the following:

- Open a new Command Prompt or PowerShell window to verify the Linux command lines are available: sh, mkdir, rm, cp, tar, gzip.

sh -version

mkdir --help

rm --help

tar --help

gzip --help

cp --help

bash

Make sure all the above commands run successfully without errors.

Step 3 Setup Maven

- Download Maven from the following website (choose the Binary tar.gz archive from the Files section on the web page):

Downloading Apache Maven 3.6.3: https://maven.apache.org/download.cgi

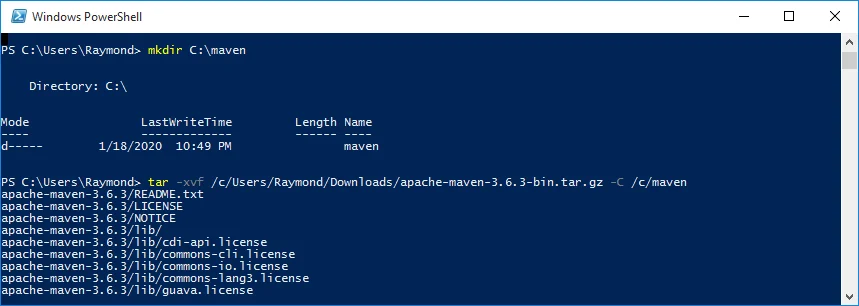

- Run the following commands in PowerShell to extract the file to an installation folder (for my system, the folder is C:\maven):

mkdir C:\maven

tar -xvf /c/Users/Raymond/Downloads/apache-maven-3.6.3-bin.tar.gz -C /c/maven

Please remember to replace the paths in the command with your own. Since tar here is the Unix-style tool that ships with Git Bash, Windows paths need to be converted to its path format.

For example:

| Windows Path | Linux Path |
|---|---|
| C:\Users\Raymond\Downloads\apache-maven-3.6.3-bin.tar.gz | /c/Users/Raymond/Downloads/apache-maven-3.6.3-bin.tar.gz |
| C:\maven | /c/maven |
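The conversion in the table follows a simple rule: the drive letter becomes a lowercase top-level directory and backslashes become forward slashes. The following Python sketch illustrates that rule. The function name to_git_bash_path is made up for this example, and it only handles absolute drive-letter paths.

```python
from pathlib import PureWindowsPath


def to_git_bash_path(windows_path: str) -> str:
    """Convert an absolute drive-letter path such as C:\\maven to /c/maven."""
    path = PureWindowsPath(windows_path)
    drive = path.drive  # for example "C:"
    if len(drive) != 2 or drive[1] != ":" or not path.root:
        raise ValueError(f"expected an absolute drive-letter path, got {windows_path!r}")
    # parts[0] is the anchor ("C:\\"); the remaining items are path components.
    components = path.parts[1:]
    return "/" + "/".join((drive[0].lower(), *components))


if __name__ == "__main__":
    print(to_git_bash_path(r"C:\maven"))
```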

The output looks like the following:

After this, Maven is installed in the following folder in my system: C:\maven\apache-maven-3.6.3.

- Run the following command in PowerShell to setup MAVEN_HOME variable (please change the path accordingly):

SETX MAVEN_HOME C:\maven\apache-maven-3.6.3

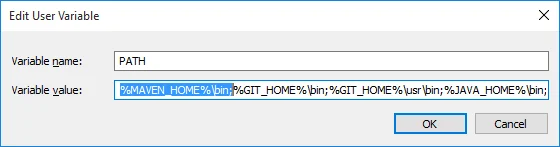

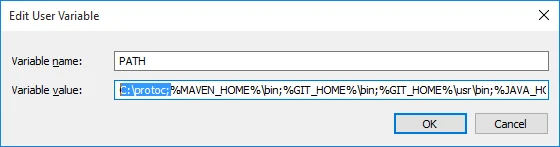

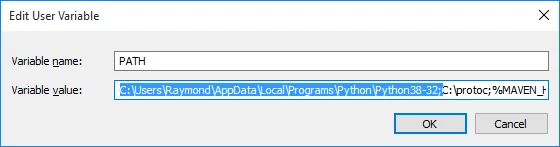

Add the following path to your user environment variable PATH:

%MAVEN_HOME%\bin;

By now, you should have these additional paths added to the PATH variable:

%MAVEN_HOME%\bin;%GIT_HOME%\bin;%GIT_HOME%\usr\bin;%JAVA_HOME%\bin;

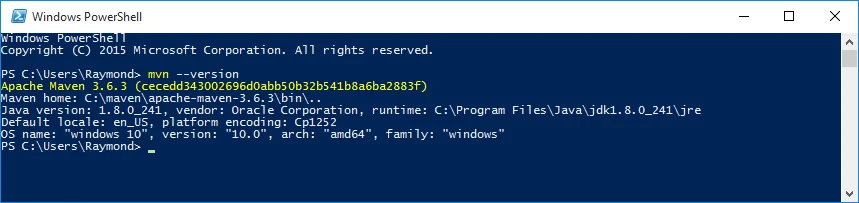

- Verify maven installation by running the following command in a new PowerShell window:

mvn --version

The output looks like the following screenshot:

- You can further configure maven local repository cache folder by editing settings.xml file in folder %MAVEN_HOME%\conf. By default, the packages will be downloaded to folder: ${user.home}/.m2/repository.

For this guide, I'll just use the default folder. In my system, the path is C:\Users\Raymond\.m2\repository.

To avoid Maven build issues like 'The command line is too long.', let's create a directory junction that points to this folder from a shorter path (remember to change to your own path accordingly). Note that mklink is a Command Prompt built-in, so run it in Command Prompt rather than PowerShell:

mklink /J C:\mrepo C:\Users\Raymond\.m2\repository

And then update settings.xml file to configure local repository path:

<localRepository>C:\mrepo</localRepository>
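As a rough illustration of where this element sits, the Python sketch below generates a minimal settings.xml that contains only the localRepository entry and then reads the value back. The function names are invented for this example, and the sketch assumes the standard Maven settings namespace. In practice you would more likely edit the existing file in %MAVEN_HOME%\conf by hand.

```python
import xml.etree.ElementTree as ET
from xml.sax.saxutils import escape

# Assumption: the standard namespace used by Maven settings files.
SETTINGS_NS = "http://maven.apache.org/SETTINGS/1.0.0"


def minimal_settings_xml(local_repository: str) -> str:
    """Return a minimal settings.xml with localRepository as a child of settings."""
    return (
        f'<settings xmlns="{SETTINGS_NS}">\n'
        f"  <localRepository>{escape(local_repository)}</localRepository>\n"
        "</settings>\n"
    )


def read_local_repository(settings_xml: str) -> str:
    """Parse settings XML text and return the configured local repository path."""
    root = ET.fromstring(settings_xml)
    element = root.find(f"{{{SETTINGS_NS}}}localRepository")
    if element is None or element.text is None:
        raise ValueError("no localRepository element found")
    return element.text.strip()
```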

Step 4 Install Protocol Buffer

- Download Protocol Buffer 2.5.0 from the following location:

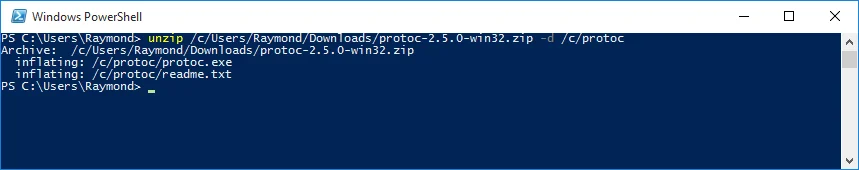

- Unzip the downloaded file to C:\protoc folder using the following commands in PowerShell:

mkdir C:\protoc

unzip /c/Users/Raymond/Downloads/protoc-2.5.0-win32.zip -d /c/protoc

Similar to the previous steps, please replace the paths accordingly to suit your environment.

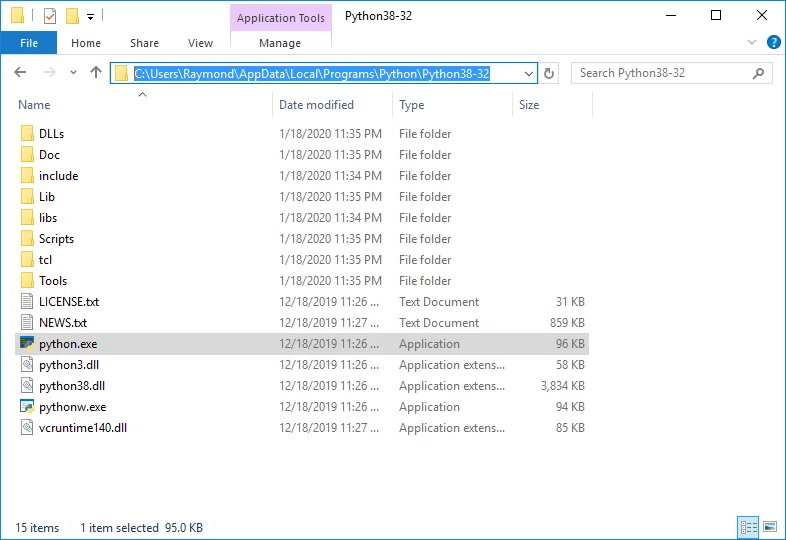

There are two files added to C:\protoc folder as the following screenshot shows:

- We also need to add protoc path (C:\protoc) to environment variable PATH:

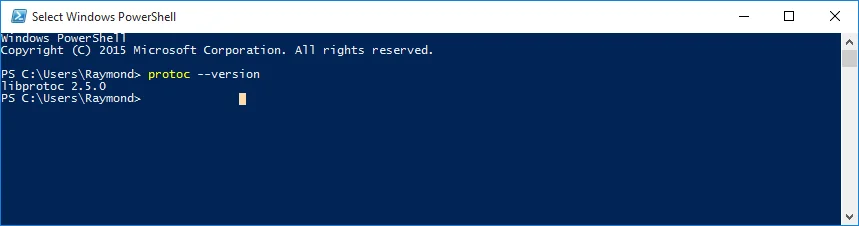

- Verify the environment variable by opening a new PowerShell or Command Prompt window and run the following command:

protoc --version

The output looks like the following:

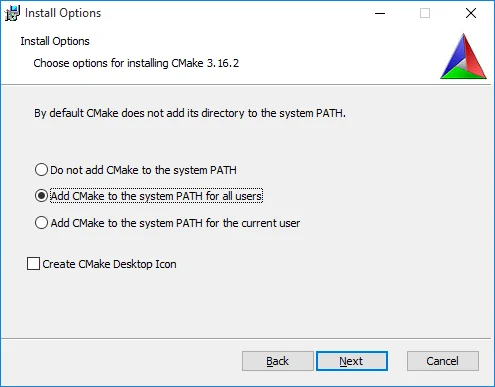

Step 5 Install CMake

Download CMake from this link: cmake-3.16.2-win64-x64.msi.

Double click the downloaded file cmake-3.16.2-win64-x64.msi to install this application.

Make sure you choose either Add CMake to the system PATH for all users or Add CMake to the system PATH for the current user.

Alternatively, you can manually add it to PATH environment variable:

C:\Program Files\CMake\bin;C:\protoc;%MAVEN_HOME%\bin;%GIT_HOME%\bin;%GIT_HOME%\usr\bin;%JAVA_HOME%\bin;%PATH%

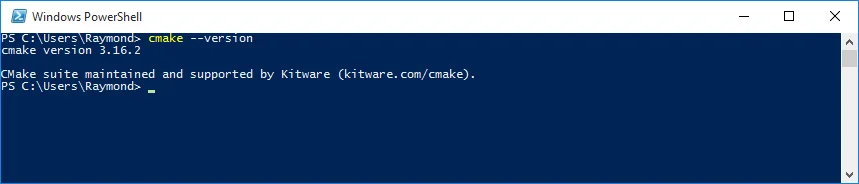

- Once you finish the installation, verify you can run cmake command in Command Prompt or PowerShell:

cmake --version

The output looks like the following screenshot:

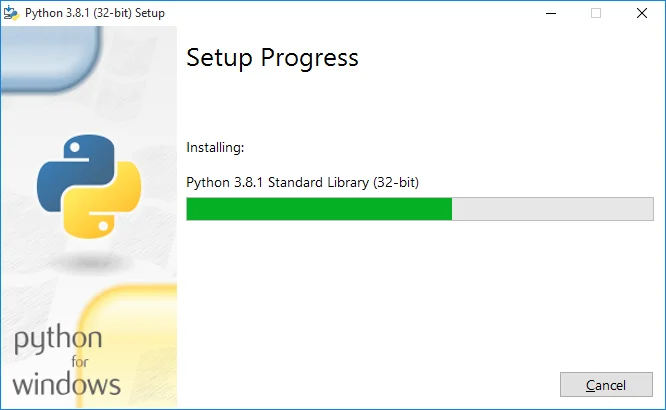

Step 6 Install Python

- Download and install Python from this web page: https://www.python.org/downloads/.

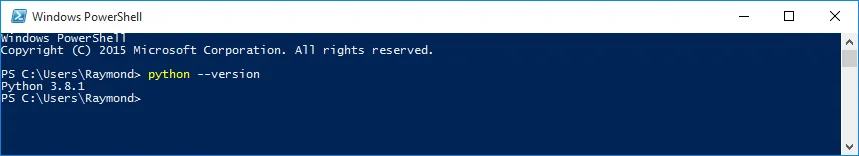

- Verify installation by running the following command in Command Prompt or PowerShell:

python --version

The output looks like the following:

If python command cannot be directly invoked, please check PATH environment variable to make sure Python installation path is added:

For example, in my environment Python is installed at the following location:

Thus path C:\Users\Raymond\AppData\Local\Programs\Python\Python38-32 is added to PATH variable.

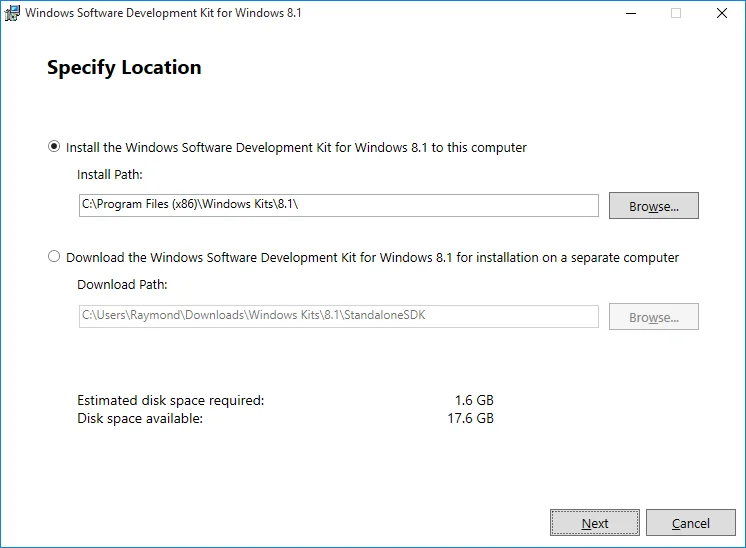

Step 7 Install Windows SDK 8.1.0

Download Windows SDK 8.1.0 from this web page: http://msdn.microsoft.com/en-us/windows/bg162891.aspx.

Click sdksetup.exe to start the installation. Follow the wizard to complete installation.

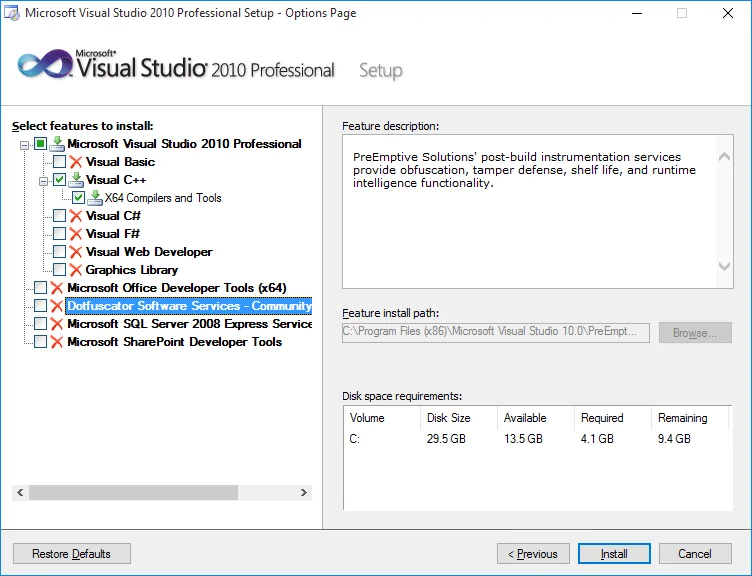

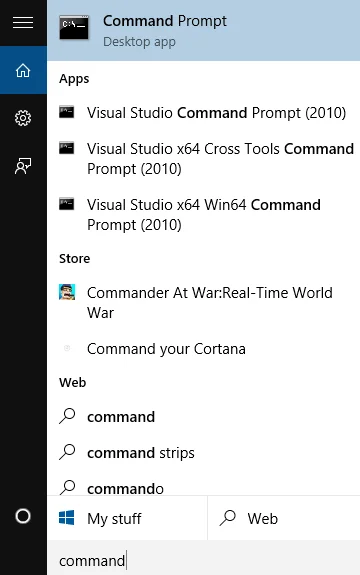

Step 8 Install Visual Studio 2010 Professional

- Download VS2010 from the following page (login is required):

https://my.visualstudio.com/Downloads?q=visual%20studio%202010&wt.mc_id=o~msft~vscom~older-downloads

- Install VS2010 using the setup wizard. To save space, I only choose Visual C++, as we only need the X64 Compilers and Tools to compile the C/C++ Windows native libs.

- After the installation is completed, a few more Command Prompt shortcuts are available. We will use the x64 command prompt in some of the following steps.

Step 9 Setup a few more environment variables

- We need to setup two more variables based on the following logic:

@REM *************************************************

@REM JDK and these settings MUST MATCH

@REM

@REM 64-bit : Platform = x64, VCVARSPLAT = amd64

@REM

@REM 32-bit : Platform = Win32, VCVARSPLAT = x86

@REM

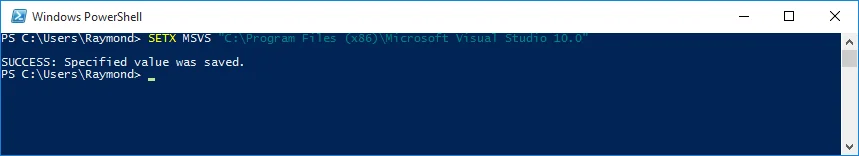

For my environment, it is 64 bit. Thus I need to run the following command in PowerShell or Command Prompt:

SETX Platform x64

SETX VCVARSPLAT amd64

The above screenshot is the output in my system.
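The matching rule in the comment block above can be written as a small lookup. This Python sketch is only an illustration of that rule: BUILD_SETTINGS and setx_commands are hypothetical names, and the bitness of your JDK is something you supply yourself.

```python
from typing import List

# Values taken from the comment block above: the JDK bitness decides both settings.
BUILD_SETTINGS = {
    64: {"Platform": "x64", "VCVARSPLAT": "amd64"},
    32: {"Platform": "Win32", "VCVARSPLAT": "x86"},
}


def setx_commands(jdk_bits: int) -> List[str]:
    """Return the SETX commands that match a 32-bit or 64-bit JDK."""
    if jdk_bits not in BUILD_SETTINGS:
        raise ValueError(f"jdk_bits must be 32 or 64, got {jdk_bits!r}")
    settings = BUILD_SETTINGS[jdk_bits]
    return [f"SETX {name} {value}" for name, value in settings.items()]


if __name__ == "__main__":
    for command in setx_commands(64):
        print(command)
```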

- Setup Visual Studio environment variable by running the following command in PowerShell or Command Prompt:

SETX MSVS "C:\Program Files (x86)\Microsoft Visual Studio 10.0"

* C:\Program Files (x86)\Microsoft Visual Studio 10.0 is the path of my Visual Studio. Please change it accordingly based on your environment setup.

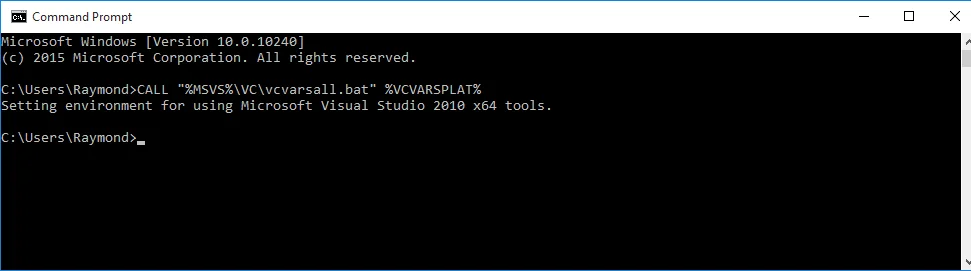

- Verify the setup by running the following command in Command Prompt:

CALL "%MSVS%\VC\vcvarsall.bat" %VCVARSPLAT%

The output looks like the following:


Step 10 Download source code

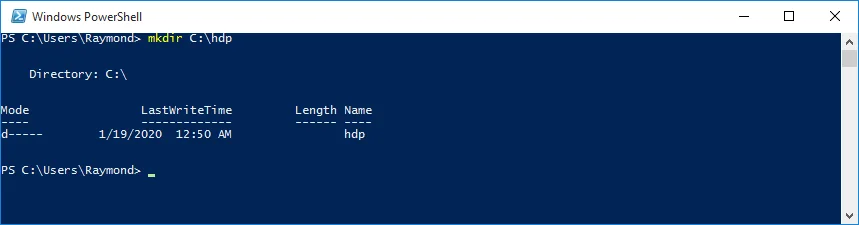

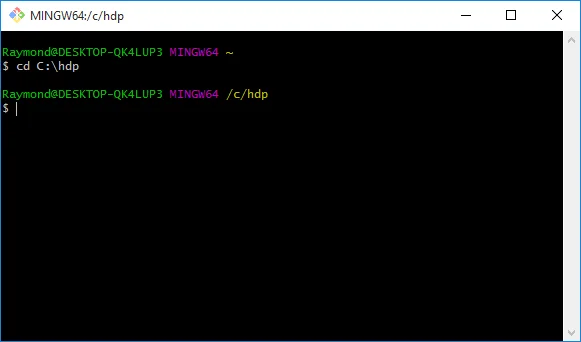

- Create a new folder C:\hdp using the following command:

mkdir C:\hdp

- Open Git Bash window (using Run as Administrator mode) and change directory to C:\hdp.

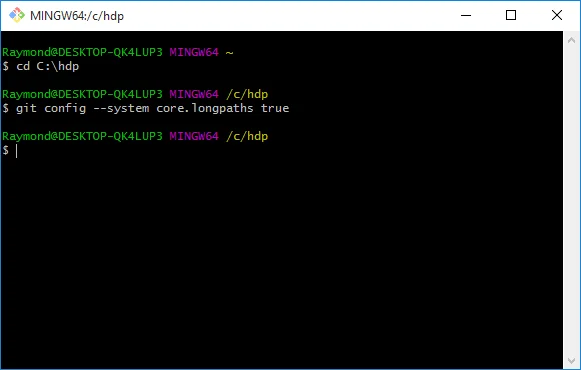

- Run the following command in Git Bash terminal to change git settings to allow long paths:

git config --system core.longpaths true

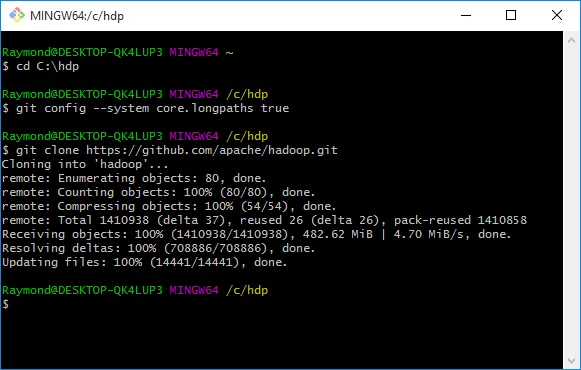

- Clone the repository by running the following command:

git clone https://github.com/apache/hadoop.git

It may take a few minutes as this repo is huge. Wait until the clone is completed successfully.

- Check out branch rel/release-3.2.1 as we will use source code in this branch to build:

cd hadoop/

git checkout rel/release-3.2.1

- Fix some C code for the HDFS native library.

As we are using Visual C++ 2010 to compile the C code, one source file must be updated manually; otherwise it won't compile, because VS 2010 supports neither declarations in for-loop headers nor mixed declarations and statements in C programs. Refer to Section II for more details about this issue.

Open file src\main\native\libhdfs-tests\test_libhdfs_threaded.c in a text editor and manually replace function static int doTestHdfsOperations(struct tlhThreadInfo *ti, hdfsFS fs,const struct tlhPaths *paths) with the following content:

static int doTestHdfsOperations(struct tlhThreadInfo *ti, hdfsFS fs,

const struct tlhPaths *paths)

{

char tmp[4096];

hdfsFile file;

int ret, expected, numEntries;

hdfsFileInfo *fileInfo;

struct hdfsReadStatistics *readStats = NULL;

struct hdfsHedgedReadMetrics *hedgedMetrics = NULL;

char invalid_path[] = "/some_invalid/path";

hdfsFileInfo * dirList;

char listDirTest[PATH_MAX];

int nFile;

char filename[PATH_MAX];

hdfsFS fs2 = NULL;

if (hdfsExists(fs, paths->prefix) == 0) {

EXPECT_ZERO(hdfsDelete(fs, paths->prefix, 1));

}

EXPECT_ZERO(hdfsCreateDirectory(fs, paths->prefix));

EXPECT_ZERO(doTestGetDefaultBlockSize(fs, paths->prefix));

/* There is no such directory.

* Check that errno is set to ENOENT

*/

EXPECT_NULL_WITH_ERRNO(hdfsListDirectory(fs, invalid_path, &numEntries), ENOENT);

/* There should be no entry in the directory. */

errno = EACCES; // see if errno is set to 0 on success

EXPECT_NULL_WITH_ERRNO(hdfsListDirectory(fs, paths->prefix, &numEntries), 0);

if (numEntries != 0) {

fprintf(stderr, "hdfsListDirectory set numEntries to "

"%d on empty directory.", numEntries);

return EIO;

}

/* There should not be any file to open for reading. */

EXPECT_NULL(hdfsOpenFile(fs, paths->file1, O_RDONLY, 0, 0, 0));

/* Check if the exceptions are stored in the TLS */

EXPECT_STR_CONTAINS(hdfsGetLastExceptionRootCause(),

"File does not exist");

EXPECT_STR_CONTAINS(hdfsGetLastExceptionStackTrace(),

"java.io.FileNotFoundException");

/* hdfsOpenFile should not accept mode = 3 */

EXPECT_NULL(hdfsOpenFile(fs, paths->file1, 3, 0, 0, 0));

file = hdfsOpenFile(fs, paths->file1, O_WRONLY, 0, 0, 0);

EXPECT_NONNULL(file);

/* TODO: implement writeFully and use it here */

expected = (int)strlen(paths->prefix);

ret = hdfsWrite(fs, file, paths->prefix, expected);

if (ret < 0) {

ret = errno;

fprintf(stderr, "hdfsWrite failed and set errno %d\n", ret);

return ret;

}

if (ret != expected) {

fprintf(stderr, "hdfsWrite was supposed to write %d bytes, but "

"it wrote %d\n", expected, ret);

return EIO;

}

EXPECT_ZERO(hdfsFlush(fs, file));

EXPECT_ZERO(hdfsHSync(fs, file));

EXPECT_ZERO(hdfsCloseFile(fs, file));

EXPECT_ZERO(doTestGetDefaultBlockSize(fs, paths->file1));

/* There should be 1 entry in the directory. */

dirList = hdfsListDirectory(fs, paths->prefix, &numEntries);

EXPECT_NONNULL(dirList);

if (numEntries != 1) {

fprintf(stderr, "hdfsListDirectory set numEntries to "

"%d on directory containing 1 file.", numEntries);

}

hdfsFreeFileInfo(dirList, numEntries);

/* Create many files for ListDirectory to page through */

strcpy(listDirTest, paths->prefix);

strcat(listDirTest, "/for_list_test/");

EXPECT_ZERO(hdfsCreateDirectory(fs, listDirTest));

for (nFile = 0; nFile < 10000; nFile++) {

snprintf(filename, PATH_MAX, "%s/many_files_%d", listDirTest, nFile);

file = hdfsOpenFile(fs, filename, O_WRONLY, 0, 0, 0);

EXPECT_NONNULL(file);

EXPECT_ZERO(hdfsCloseFile(fs, file));

}

dirList = hdfsListDirectory(fs, listDirTest, &numEntries);

EXPECT_NONNULL(dirList);

hdfsFreeFileInfo(dirList, numEntries);

if (numEntries != 10000) {

fprintf(stderr, "hdfsListDirectory set numEntries to "

"%d on directory containing 10000 files.", numEntries);

return EIO;

}

/* Let's re-open the file for reading */

file = hdfsOpenFile(fs, paths->file1, O_RDONLY, 0, 0, 0);

EXPECT_NONNULL(file);

EXPECT_ZERO(hdfsFileGetReadStatistics(file, &readStats));

errno = 0;

EXPECT_UINT64_EQ(UINT64_C(0), readStats->totalBytesRead);

EXPECT_UINT64_EQ(UINT64_C(0), readStats->totalLocalBytesRead);

EXPECT_UINT64_EQ(UINT64_C(0), readStats->totalShortCircuitBytesRead);

hdfsFileFreeReadStatistics(readStats);

/* Verify that we can retrieve the hedged read metrics */

EXPECT_ZERO(hdfsGetHedgedReadMetrics(fs, &hedgedMetrics));

errno = 0;

EXPECT_UINT64_EQ(UINT64_C(0), hedgedMetrics->hedgedReadOps);

EXPECT_UINT64_EQ(UINT64_C(0), hedgedMetrics->hedgedReadOpsWin);

EXPECT_UINT64_EQ(UINT64_C(0), hedgedMetrics->hedgedReadOpsInCurThread);

hdfsFreeHedgedReadMetrics(hedgedMetrics);

/* TODO: implement readFully and use it here */

ret = hdfsRead(fs, file, tmp, sizeof(tmp));

if (ret < 0) {

ret = errno;

fprintf(stderr, "hdfsRead failed and set errno %d\n", ret);

return ret;

}

if (ret != expected) {

fprintf(stderr, "hdfsRead was supposed to read %d bytes, but "

"it read %d\n", ret, expected);

return EIO;

}

EXPECT_ZERO(hdfsFileGetReadStatistics(file, &readStats));

errno = 0;

EXPECT_UINT64_EQ((uint64_t)expected, readStats->totalBytesRead);

hdfsFileFreeReadStatistics(readStats);

EXPECT_ZERO(hdfsFileClearReadStatistics(file));

EXPECT_ZERO(hdfsFileGetReadStatistics(file, &readStats));

EXPECT_UINT64_EQ((uint64_t)0, readStats->totalBytesRead);

hdfsFileFreeReadStatistics(readStats);

EXPECT_ZERO(memcmp(paths->prefix, tmp, expected));

EXPECT_ZERO(hdfsCloseFile(fs, file));

//Non-recursive delete fails

EXPECT_NONZERO(hdfsDelete(fs, paths->prefix, 0));

EXPECT_ZERO(hdfsCopy(fs, paths->file1, fs, paths->file2));

EXPECT_ZERO(hdfsChown(fs, paths->file2, NULL, NULL));

EXPECT_ZERO(hdfsChown(fs, paths->file2, NULL, "doop"));

fileInfo = hdfsGetPathInfo(fs, paths->file2);

EXPECT_NONNULL(fileInfo);

EXPECT_ZERO(strcmp("doop", fileInfo->mGroup));

EXPECT_ZERO(hdfsFileIsEncrypted(fileInfo));

hdfsFreeFileInfo(fileInfo, 1);

EXPECT_ZERO(hdfsChown(fs, paths->file2, "ha", "doop2"));

fileInfo = hdfsGetPathInfo(fs, paths->file2);

EXPECT_NONNULL(fileInfo);

EXPECT_ZERO(strcmp("ha", fileInfo->mOwner));

EXPECT_ZERO(strcmp("doop2", fileInfo->mGroup));

hdfsFreeFileInfo(fileInfo, 1);

EXPECT_ZERO(hdfsChown(fs, paths->file2, "ha2", NULL));

fileInfo = hdfsGetPathInfo(fs, paths->file2);

EXPECT_NONNULL(fileInfo);

EXPECT_ZERO(strcmp("ha2", fileInfo->mOwner));

EXPECT_ZERO(strcmp("doop2", fileInfo->mGroup));

hdfsFreeFileInfo(fileInfo, 1);

snprintf(tmp, sizeof(tmp), "%s/nonexistent-file-name", paths->prefix);

EXPECT_NEGATIVE_ONE_WITH_ERRNO(hdfsChown(fs, tmp, "ha3", NULL), ENOENT);

//Test case: File does not exist

EXPECT_NULL_WITH_ERRNO(hdfsGetPathInfo(fs, invalid_path), ENOENT);

//Test case: No permission to access parent directory

EXPECT_ZERO(hdfsChmod(fs, paths->prefix, 0));

//reconnect as user "SomeGuy" and verify that we get permission errors

EXPECT_ZERO(hdfsSingleNameNodeConnect(tlhCluster, &fs2, "SomeGuy"));

EXPECT_NULL_WITH_ERRNO(hdfsGetPathInfo(fs2, paths->file2), EACCES);

EXPECT_ZERO(hdfsDisconnect(fs2));

return 0;

}

Step 11 Review before build

In the previous steps, we installed all the required tools, set up the necessary environment variables and checked out the code for the build. The following environment variables were added:

- GIT_HOME = C:\Program Files\Git

- JAVA_HOME = C:\Program Files\Java\jdk1.8.0_241

- MAVEN_HOME = C:\maven\apache-maven-3.6.3

- MSVS = C:\Program Files (x86)\Microsoft Visual Studio 10.0

- Platform = x64

- VCVARSPLAT = amd64

- PATH = C:\Users\Raymond\AppData\Local\Programs\Python\Python38-32;C:\protoc;%MAVEN_HOME%\bin;%GIT_HOME%\bin;%GIT_HOME%\usr\bin;%JAVA_HOME%\bin;%PATH%
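Before starting the build, it can help to confirm that the tools installed in the previous steps actually resolve on PATH. The following Python sketch is one way to do that using shutil.which from the standard library. The tool list is my own summary of the commands verified earlier in this guide, and missing_tools is a hypothetical helper name.

```python
import shutil

# Commands verified in the earlier steps of this guide.
REQUIRED_TOOLS = [
    "java", "mvn", "protoc", "cmake", "git",
    "sh", "mkdir", "rm", "cp", "tar", "gzip",
]


def missing_tools(tools=REQUIRED_TOOLS, which=shutil.which):
    """Return the tools that cannot be found on PATH.

    The lookup function is a parameter so the check can be exercised
    without touching the real environment.
    """
    return [tool for tool in tools if which(tool) is None]


if __name__ == "__main__":
    missing = missing_tools()
    if missing:
        print("Not found on PATH:", ", ".join(missing))
    else:
        print("All required tools were found on PATH.")
```

Run it from a newly opened window so that the environment variables set with SETX are visible to the process.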

Now let's start building.

Step 12 Use Maven to build Hadoop 3.2.1

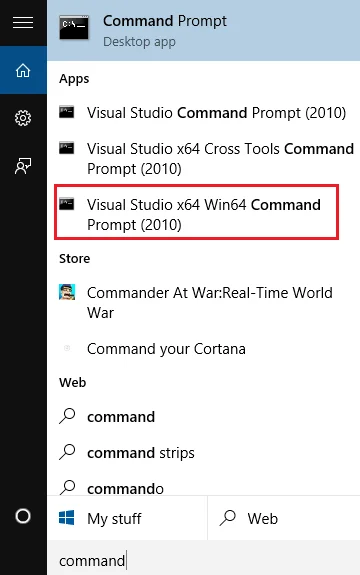

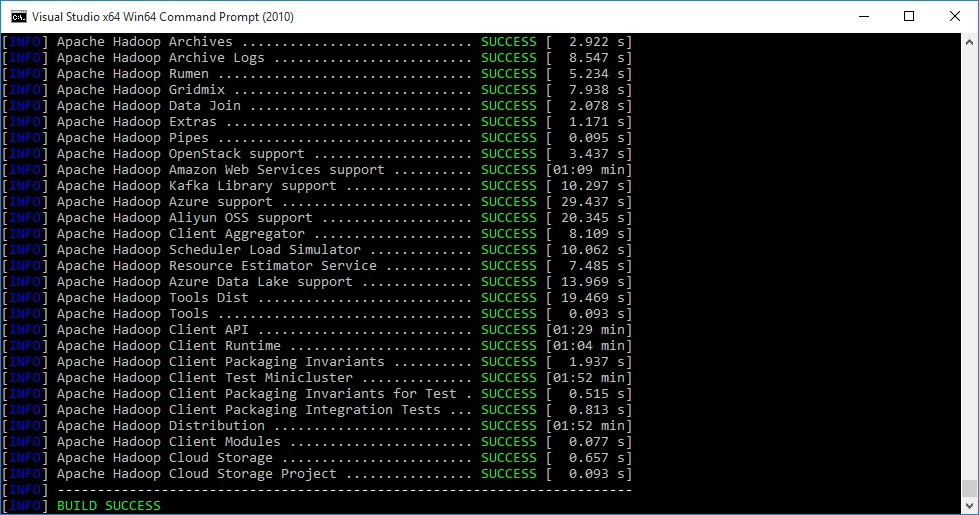

- Open Visual Studio x64 Win64 Command Prompt (2010)

- Change to the Hadoop source code directory by running the following command in the window opened in the previous step:

cd C:/hdp/hadoop

- Run the following Maven command to start build:

mvn package -Pdist -DskipTests -Dtar -Dmaven.javadoc.skip=true

The above command skips running the tests and skips Javadoc generation to save time.

Info: Syntax reference: Build distribution with native code: mvn package [-Pdist][-Pdocs][-Psrc][-Dtar][-Dmaven.javadoc.skip=true]. For more information about the Maven CLI, please refer to the Maven CLI Options Reference.

Warning: We cannot use the option -Dmaven.test.skip=true, otherwise the build for hadoop-yarn-project will fail. Refer to YARN-4084 for more details.

The build may take a long time, as many dependent packages need to be downloaded and many projects need to be built. The packages are only downloaded the first time you run the build, because they are cached in the local Maven repository based on your configuration. In my system, the packages are downloaded to C:\Users\Raymond\.m2\repository.

Wait until the build completes.

- When the build completes successfully, Maven will show the summary of all the projects.

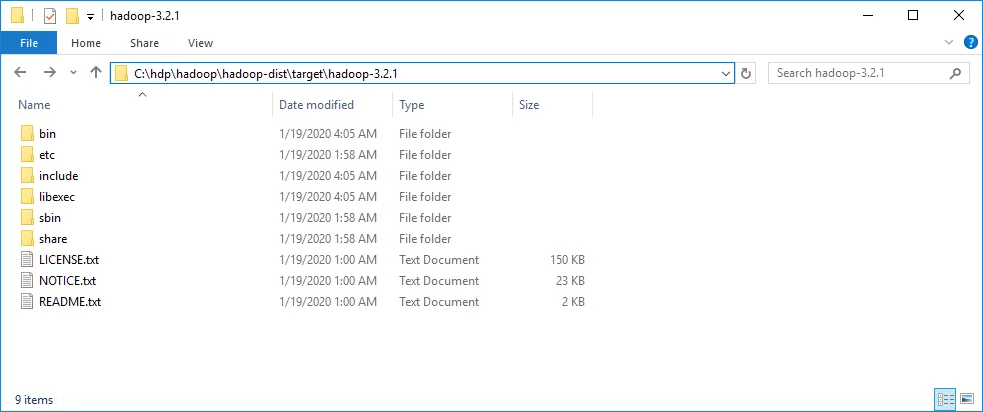

- The artefacts are published in the destination folder: C:\hdp\hadoop\hadoop-dist\target\hadoop-3.2.1.

Congratulations! You've now successfully built Hadoop 3.2.1 on Windows 10.

Section II Issue Fixes

This section documents some issues encountered when I was doing the build.

Resolve issues

As part of the build process, I did encounter a few minor issues. The following sections document the resolutions for your reference.

The command line is too long

I initially ran the build with the following command:

mvn package -Pdist,native-win -DskipTests -Dmaven.test.skip=true -Dtar -Dmaven.javadoc.skip=true

The process failed at the hadoop-common project build with the error 'The command line is too long'.

To resolve this issue, I created a directory junction (mklink /J) that gives the Maven local repository a shorter path:

mklink /J C:\mrepo C:\Users\Raymond\.m2\repository

The details are already updated in Step 3.
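One likely explanation for why the shorter path helps, which the build log above does not confirm, is that every dependency JAR on a generated classpath repeats the repository root, so a shorter root shrinks the whole command line. The Python sketch below estimates that effect. The JAR names are invented for illustration, and the 8191-character figure is the documented cmd.exe command-line limit, assumed here to be the limit that was hit.

```python
import ntpath

CMD_LIMIT = 8191  # assumption: the cmd.exe command-line limit is the one being hit


def classpath_length(repo_root, relative_jars):
    """Return the length of a ';'-separated classpath rooted at repo_root."""
    entries = [ntpath.join(repo_root, jar) for jar in relative_jars]
    return len(";".join(entries))


if __name__ == "__main__":
    # Hypothetical dependency list; real builds pull in their own set of JARs.
    jars = [rf"org\example\lib{i}\1.0\lib{i}-1.0.jar" for i in range(200)]
    for root in (r"C:\Users\Raymond\.m2\repository", r"C:\mrepo"):
        length = classpath_length(root, jars)
        print(root, length, "over limit" if length > CMD_LIMIT else "within limit")
```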

Apache Hadoop HDFS Native Client Build failure

The build of this project failed due to some coding issues in the source file: src\main\native\libhdfs-tests\test_libhdfs_threaded.c.

Detailed errors:

[exec] ..\..\..\..\..\src\main\native\libhdfs-tests\test_libhdfs_threaded.c(163): error C2143: syntax error : missing ';' before 'type' [C:\hdp\hadoop\hadoop-hdfs-project\hadoop-hdfs-native-client\target\native\main\native\libhdfs\test_libhdfs_threaded_hdfs_static.vcxproj]

[exec] ..\..\..\..\..\src\main\native\libhdfs-tests\test_libhdfs_threaded.c(164): error C2065: 'invalid_path' : undeclared identifier [C:\hdp\hadoop\hadoop-hdfs-project\hadoop-hdfs-native-client\target\native\main\native\libhdfs\test_libhdfs_threaded_hdfs_static.vcxproj]

[exec] ..\..\..\..\..\src\main\native\libhdfs-tests\test_libhdfs_threaded.c(210): error C2143: syntax error : missing ';' before 'type' [C:\hdp\hadoop\hadoop-hdfs-project\hadoop-hdfs-native-client\target\native\main\native\libhdfs\test_libhdfs_threaded_hdfs_static.vcxproj]

[exec] ..\..\..\..\..\src\main\native\libhdfs-tests\test_libhdfs_threaded.c(211): error C2065: 'dirList' : undeclared identifier [C:\hdp\hadoop\hadoop-hdfs-project\hadoop-hdfs-native-client\target\native\main\native\libhdfs\test_libhdfs_threaded_hdfs_static.vcxproj]

[exec] ..\..\..\..\..\src\main\native\libhdfs-tests\test_libhdfs_threaded.c(216): error C2065: 'dirList' : undeclared identifier [C:\hdp\hadoop\hadoop-hdfs-project\hadoop-hdfs-native-client\target\native\main\native\libhdfs\test_libhdfs_threaded_hdfs_static.vcxproj]

[exec] ..\..\..\..\..\src\main\native\libhdfs-tests\test_libhdfs_threaded.c(219): error C2143: syntax error : missing ';' before 'type' [C:\hdp\hadoop\hadoop-hdfs-project\hadoop-hdfs-native-client\target\native\main\native\libhdfs\test_libhdfs_threaded_hdfs_static.vcxproj]

[exec] ..\..\..\..\..\src\main\native\libhdfs-tests\test_libhdfs_threaded.c(220): error C2065: 'listDirTest' : undeclared identifier [C:\hdp\hadoop\hadoop-hdfs-project\hadoop-hdfs-native-client\target\native\main\native\libhdfs\test_libhdfs_threaded_hdfs_static.vcxproj]

[exec] ..\..\..\..\..\src\main\native\libhdfs-tests\test_libhdfs_threaded.c(221): error C2065: 'listDirTest' : undeclared identifier [C:\hdp\hadoop\hadoop-hdfs-project\hadoop-hdfs-native-client\target\native\main\native\libhdfs\test_libhdfs_threaded_hdfs_static.vcxproj]

[exec] ..\..\..\..\..\src\main\native\libhdfs-tests\test_libhdfs_threaded.c(222): error C2065: 'listDirTest' : undeclared identifier [C:\hdp\hadoop\hadoop-hdfs-project\hadoop-hdfs-native-client\target\native\main\native\libhdfs\test_libhdfs_threaded_hdfs_static.vcxproj]

[exec] ..\..\..\..\..\src\main\native\libhdfs-tests\test_libhdfs_threaded.c(223): error C2143: syntax error : missing ';' before 'type' [C:\hdp\hadoop\hadoop-hdfs-project\hadoop-hdfs-native-client\target\native\main\native\libhdfs\test_libhdfs_threaded_hdfs_static.vcxproj]

[exec] ..\..\..\..\..\src\main\native\libhdfs-tests\test_libhdfs_threaded.c(224): error C2065: 'nFile' : undeclared identifier [C:\hdp\hadoop\hadoop-hdfs-project\hadoop-hdfs-native-client\target\native\main\native\libhdfs\test_libhdfs_threaded_hdfs_static.vcxproj]

[exec] ..\..\..\..\..\src\main\native\libhdfs-tests\test_libhdfs_threaded.c(224): error C2065: 'nFile' : undeclared identifier [C:\hdp\hadoop\hadoop-hdfs-project\hadoop-hdfs-native-client\target\native\main\native\libhdfs\test_libhdfs_threaded_hdfs_static.vcxproj]

[exec] ..\..\..\..\..\src\main\native\libhdfs-tests\test_libhdfs_threaded.c(224): error C2065: 'nFile' : undeclared identifier [C:\hdp\hadoop\hadoop-hdfs-project\hadoop-hdfs-native-client\target\native\main\native\libhdfs\test_libhdfs_threaded_hdfs_static.vcxproj]

[exec] ..\..\..\..\..\src\main\native\libhdfs-tests\test_libhdfs_threaded.c(226): error C2065: 'listDirTest' : undeclared identifier [C:\hdp\hadoop\hadoop-hdfs-project\hadoop-hdfs-native-client\target\native\main\native\libhdfs\test_libhdfs_threaded_hdfs_static.vcxproj]

[exec] ..\..\..\..\..\src\main\native\libhdfs-tests\test_libhdfs_threaded.c(226): error C2065: 'nFile' : undeclared identifier [C:\hdp\hadoop\hadoop-hdfs-project\hadoop-hdfs-native-client\target\native\main\native\libhdfs\test_libhdfs_threaded_hdfs_static.vcxproj]

[exec] ..\..\..\..\..\src\main\native\libhdfs-tests\test_libhdfs_threaded.c(231): error C2065: 'dirList' : undeclared identifier [C:\hdp\hadoop\hadoop-hdfs-project\hadoop-hdfs-native-client\target\native\main\native\libhdfs\test_libhdfs_threaded_hdfs_static.vcxproj]

[exec] ..\..\..\..\..\src\main\native\libhdfs-tests\test_libhdfs_threaded.c(231): error C2065: 'listDirTest' : undeclared identifier [C:\hdp\hadoop\hadoop-hdfs-project\hadoop-hdfs-native-client\target\native\main\native\libhdfs\test_libhdfs_threaded_hdfs_static.vcxproj]

[exec] ..\..\..\..\..\src\main\native\libhdfs-tests\test_libhdfs_threaded.c(232): error C2065: 'dirList' : undeclared identifier [C:\hdp\hadoop\hadoop-hdfs-project\hadoop-hdfs-native-client\target\native\main\native\libhdfs\test_libhdfs_threaded_hdfs_static.vcxproj]

[exec] ..\..\..\..\..\src\main\native\libhdfs-tests\test_libhdfs_threaded.c(233): error C2065: 'dirList' : undeclared identifier [C:\hdp\hadoop\hadoop-hdfs-project\hadoop-hdfs-native-client\target\native\main\native\libhdfs\test_libhdfs_threaded_hdfs_static.vcxproj]

[exec] ..\..\..\..\..\src\main\native\libhdfs-tests\test_libhdfs_threaded.c(312): error C2065: 'invalid_path' : undeclared identifier [C:\hdp\hadoop\hadoop-hdfs-project\hadoop-hdfs-native-client\target\native\main\native\libhdfs\test_libhdfs_threaded_hdfs_static.vcxproj]

[exec] ..\..\..\..\..\src\main\native\libhdfs-tests\test_libhdfs_threaded.c(317): error C2143: syntax error : missing ';' before 'type' [C:\hdp\hadoop\hadoop-hdfs-project\hadoop-hdfs-native-client\target\native\main\native\libhdfs\test_libhdfs_threaded_hdfs_static.vcxproj]

[exec] ..\..\..\..\..\src\main\native\libhdfs-tests\test_libhdfs_threaded.c(318): error C2065: 'fs2' : undeclared identifier [C:\hdp\hadoop\hadoop-hdfs-project\hadoop-hdfs-native-client\target\native\main\native\libhdfs\test_libhdfs_threaded_hdfs_static.vcxproj]

[exec] ..\..\..\..\..\src\main\native\libhdfs-tests\test_libhdfs_threaded.c(319): error C2065: 'fs2' : undeclared identifier [C:\hdp\hadoop\hadoop-hdfs-project\hadoop-hdfs-native-client\target\native\main\native\libhdfs\test_libhdfs_threaded_hdfs_static.vcxproj]

[exec] ..\..\..\..\..\src\main\native\libhdfs-tests\test_libhdfs_threaded.c(320): error C2065: 'fs2' : undeclared identifier [C:\hdp\hadoop\hadoop-hdfs-project\hadoop-hdfs-native-client\target\native\main\native\libhdfs\test_libhdfs_threaded_hdfs_static.vcxproj]

This issue occurred because the VS C++ 2010 compiler doesn't support declaring variables in the middle of a function or in a for-loop header in C code.
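If you want to check other C files for one of these two patterns, a rough text search is enough to find candidates. The Python sketch below flags for-loop headers that start with a common built-in type. It is a heuristic under stated assumptions, not a C parser: it does not detect mixed declarations and statements, and it misses typedef names and bare unsigned declarations. The function name find_for_header_declarations is made up for this example.

```python
import re

# Matches "for (" followed by optional qualifiers and a common built-in type.
FOR_DECL = re.compile(
    r"\bfor\s*\(\s*(?:(?:const|unsigned|signed)\s+)*"
    r"(?:int|long|short|char|float|double|size_t)\b"
)


def find_for_header_declarations(source):
    """Return (line_number, line) pairs whose for-loop header declares a variable."""
    hits = []
    for line_number, line in enumerate(source.splitlines(), start=1):
        if FOR_DECL.search(line):
            hits.append((line_number, line.strip()))
    return hits
```

Each reported line is a candidate for moving the declaration to the top of the enclosing block.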

To fix it, I moved the variable declarations to the beginning of the function as a local workaround:

static int doTestHdfsOperations(struct tlhThreadInfo *ti, hdfsFS fs,

const struct tlhPaths *paths)

{

char tmp[4096];

hdfsFile file;

int ret, expected, numEntries;

hdfsFileInfo *fileInfo;

struct hdfsReadStatistics *readStats = NULL;

struct hdfsHedgedReadMetrics *hedgedMetrics = NULL;

char invalid_path[] = "/some_invalid/path";

hdfsFileInfo * dirList;

char listDirTest[PATH_MAX];

int nFile;

char filename[PATH_MAX];

hdfsFS fs2 = NULL;

if (hdfsExists(fs, paths->prefix) == 0) {

EXPECT_ZERO(hdfsDelete(fs, paths->prefix, 1));

}

EXPECT_ZERO(hdfsCreateDirectory(fs, paths->prefix));

EXPECT_ZERO(doTestGetDefaultBlockSize(fs, paths->prefix));

/* There is no such directory.

* Check that errno is set to ENOENT

*/

EXPECT_NULL_WITH_ERRNO(hdfsListDirectory(fs, invalid_path, &numEntries), ENOENT);

/* There should be no entry in the directory. */

errno = EACCES; // see if errno is set to 0 on success

EXPECT_NULL_WITH_ERRNO(hdfsListDirectory(fs, paths->prefix, &numEntries), 0);

if (numEntries != 0) {

fprintf(stderr, "hdfsListDirectory set numEntries to "

"%d on empty directory.", numEntries);

return EIO;

}

/* There should not be any file to open for reading. */

EXPECT_NULL(hdfsOpenFile(fs, paths->file1, O_RDONLY, 0, 0, 0));

/* Check if the exceptions are stored in the TLS */

EXPECT_STR_CONTAINS(hdfsGetLastExceptionRootCause(),

"File does not exist");

EXPECT_STR_CONTAINS(hdfsGetLastExceptionStackTrace(),

"java.io.FileNotFoundException");

/* hdfsOpenFile should not accept mode = 3 */

EXPECT_NULL(hdfsOpenFile(fs, paths->file1, 3, 0, 0, 0));

file = hdfsOpenFile(fs, paths->file1, O_WRONLY, 0, 0, 0);

EXPECT_NONNULL(file);

/* TODO: implement writeFully and use it here */

expected = (int)strlen(paths->prefix);

ret = hdfsWrite(fs, file, paths->prefix, expected);

if (ret < 0) {

ret = errno;

fprintf(stderr, "hdfsWrite failed and set errno %d\n", ret);

return ret;

}

if (ret != expected) {

fprintf(stderr, "hdfsWrite was supposed to write %d bytes, but "

"it wrote %d\n", expected, ret);

return EIO;

}

EXPECT_ZERO(hdfsFlush(fs, file));

EXPECT_ZERO(hdfsHSync(fs, file));

EXPECT_ZERO(hdfsCloseFile(fs, file));

EXPECT_ZERO(doTestGetDefaultBlockSize(fs, paths->file1));

/* There should be 1 entry in the directory. */

dirList = hdfsListDirectory(fs, paths->prefix, &numEntries);

EXPECT_NONNULL(dirList);

if (numEntries != 1) {

fprintf(stderr, "hdfsListDirectory set numEntries to "

"%d on directory containing 1 file.", numEntries);

}

hdfsFreeFileInfo(dirList, numEntries);

/* Create many files for ListDirectory to page through */

strcpy(listDirTest, paths->prefix);

strcat(listDirTest, "/for_list_test/");

EXPECT_ZERO(hdfsCreateDirectory(fs, listDirTest));

for (nFile = 0; nFile < 10000; nFile++) {

snprintf(filename, PATH_MAX, "%s/many_files_%d", listDirTest, nFile);

file = hdfsOpenFile(fs, filename, O_WRONLY, 0, 0, 0);

EXPECT_NONNULL(file);

EXPECT_ZERO(hdfsCloseFile(fs, file));

}

dirList = hdfsListDirectory(fs, listDirTest, &numEntries);

EXPECT_NONNULL(dirList);

hdfsFreeFileInfo(dirList, numEntries);

if (numEntries != 10000) {

fprintf(stderr, "hdfsListDirectory set numEntries to "

"%d on directory containing 10000 files.", numEntries);

return EIO;

}

/* Let's re-open the file for reading */

file = hdfsOpenFile(fs, paths->file1, O_RDONLY, 0, 0, 0);

EXPECT_NONNULL(file);

EXPECT_ZERO(hdfsFileGetReadStatistics(file, &readStats));

errno = 0;

EXPECT_UINT64_EQ(UINT64_C(0), readStats->totalBytesRead);

EXPECT_UINT64_EQ(UINT64_C(0), readStats->totalLocalBytesRead);

EXPECT_UINT64_EQ(UINT64_C(0), readStats->totalShortCircuitBytesRead);

hdfsFileFreeReadStatistics(readStats);

/* Verify that we can retrieve the hedged read metrics */

EXPECT_ZERO(hdfsGetHedgedReadMetrics(fs, &hedgedMetrics));

errno = 0;

EXPECT_UINT64_EQ(UINT64_C(0), hedgedMetrics->hedgedReadOps);

EXPECT_UINT64_EQ(UINT64_C(0), hedgedMetrics->hedgedReadOpsWin);

EXPECT_UINT64_EQ(UINT64_C(0), hedgedMetrics->hedgedReadOpsInCurThread);

hdfsFreeHedgedReadMetrics(hedgedMetrics);

/* TODO: implement readFully and use it here */

ret = hdfsRead(fs, file, tmp, sizeof(tmp));

if (ret < 0) {

ret = errno;

fprintf(stderr, "hdfsRead failed and set errno %d\n", ret);

return ret;

}

if (ret != expected) {

fprintf(stderr, "hdfsRead was supposed to read %d bytes, but "

"it read %d\n", expected, ret);

return EIO;

}

EXPECT_ZERO(hdfsFileGetReadStatistics(file, &readStats));

errno = 0;

EXPECT_UINT64_EQ((uint64_t)expected, readStats->totalBytesRead);

hdfsFileFreeReadStatistics(readStats);

EXPECT_ZERO(hdfsFileClearReadStatistics(file));

EXPECT_ZERO(hdfsFileGetReadStatistics(file, &readStats));

EXPECT_UINT64_EQ((uint64_t)0, readStats->totalBytesRead);

hdfsFileFreeReadStatistics(readStats);

EXPECT_ZERO(memcmp(paths->prefix, tmp, expected));

EXPECT_ZERO(hdfsCloseFile(fs, file));

//Non-recursive delete fails

EXPECT_NONZERO(hdfsDelete(fs, paths->prefix, 0));

EXPECT_ZERO(hdfsCopy(fs, paths->file1, fs, paths->file2));

EXPECT_ZERO(hdfsChown(fs, paths->file2, NULL, NULL));

EXPECT_ZERO(hdfsChown(fs, paths->file2, NULL, "doop"));

fileInfo = hdfsGetPathInfo(fs, paths->file2);

EXPECT_NONNULL(fileInfo);

EXPECT_ZERO(strcmp("doop", fileInfo->mGroup));

EXPECT_ZERO(hdfsFileIsEncrypted(fileInfo));

hdfsFreeFileInfo(fileInfo, 1);

EXPECT_ZERO(hdfsChown(fs, paths->file2, "ha", "doop2"));

fileInfo = hdfsGetPathInfo(fs, paths->file2);

EXPECT_NONNULL(fileInfo);

EXPECT_ZERO(strcmp("ha", fileInfo->mOwner));

EXPECT_ZERO(strcmp("doop2", fileInfo->mGroup));

hdfsFreeFileInfo(fileInfo, 1);

EXPECT_ZERO(hdfsChown(fs, paths->file2, "ha2", NULL));

fileInfo = hdfsGetPathInfo(fs, paths->file2);

EXPECT_NONNULL(fileInfo);

EXPECT_ZERO(strcmp("ha2", fileInfo->mOwner));

EXPECT_ZERO(strcmp("doop2", fileInfo->mGroup));

hdfsFreeFileInfo(fileInfo, 1);

snprintf(tmp, sizeof(tmp), "%s/nonexistent-file-name", paths->prefix);

EXPECT_NEGATIVE_ONE_WITH_ERRNO(hdfsChown(fs, tmp, "ha3", NULL), ENOENT);

//Test case: File does not exist

EXPECT_NULL_WITH_ERRNO(hdfsGetPathInfo(fs, invalid_path), ENOENT);

//Test case: No permission to access parent directory

EXPECT_ZERO(hdfsChmod(fs, paths->prefix, 0));

//reconnect as user "SomeGuy" and verify that we get permission errors

EXPECT_ZERO(hdfsSingleNameNodeConnect(tlhCluster, &fs2, "SomeGuy"));

EXPECT_NULL_WITH_ERRNO(hdfsGetPathInfo(fs2, paths->file2), EACCES);

EXPECT_ZERO(hdfsDisconnect(fs2));

return 0;

}

Section III Summary

A summary of this guide.

Summary

The sections above provide comprehensive step-by-step instructions for building Hadoop release 3.2.1. The setup takes some time because the build requires many tools, which can be grouped into the following categories:

- Java related tools: JDK, Maven

- C/C++ and Windows related tools: Windows SDK, Visual C++, CMake

- Linux style CLIs: Git Bash

- Other framework dependencies: Protocol Buffers

- Auxiliary tools: Python, for generating documentation and running some checks (once Hadoop is built, we can run compatibility checks with checkcompatibility.py).
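Before starting a build, it can help to confirm that the command-line tools from these groups are actually reachable on PATH. The following is a minimal sketch for Git Bash, not part of the official build instructions. The executable names (`java`, `mvn`, `protoc`, `cmake` and so on) are assumptions about how the tools were installed, and the function name `missing_tools` is made up for this example. It does not check for the Windows SDK or Visual C++, which are normally made available through a Visual Studio developer command prompt rather than a plain PATH lookup.

```shell
#!/usr/bin/env bash
# Print the name of every command in the argument list that is NOT on PATH.
# missing_tools is a hypothetical helper name used only in this sketch.
missing_tools() {
  for tool in "$@"; do
    command -v "$tool" >/dev/null 2>&1 || printf '%s\n' "$tool"
  done
  return 0
}

# Assumed executable names for the tools discussed in this guide.
required="java mvn protoc cmake git sh mkdir rm cp tar gzip"

# $required is intentionally unquoted so it splits into separate arguments.
missing=$(missing_tools $required)

if [ -z "$missing" ]; then
  echo "All listed tools were found on PATH."
else
  echo "Not found on PATH:" $missing
fi
```

If a tool is reported as missing even though it is installed, the usual cause is that its `bin` folder has not been added to the PATH environment variable, or that the terminal was opened before the variable was changed.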

Different Hadoop versions require different versions of these frameworks and tools. Using this guide as a baseline, you can make small adjustments to:

- build Hadoop 2.x on Windows

- build Hadoop 3.0 on Windows

- build Hadoop 3.2.2/3.3.0 on Windows

- or build with the latest code base
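As a rough sketch of how to start on another version, the snippet below builds the Git ref name for a release, assuming other releases follow the same `rel/release-<version>` naming as the `rel/release-3.2.1` branch used in this guide. The helper name `release_ref` is hypothetical. The commented-out commands show how the ref might be used inside a local clone of the Hadoop repository to switch source code and then look up that release's prerequisites in BUILDING.txt.

```shell
#!/usr/bin/env bash
# Build the Git ref for a Hadoop release, assuming the rel/release-<version>
# naming used for 3.2.1 also applies to the requested version.
# release_ref is a hypothetical helper name used only in this sketch.
release_ref() {
  printf 'rel/release-%s\n' "$1"
}

# Print the ref for another version.
release_ref 3.2.2

# Inside a local clone of the Hadoop repository, the ref could then be used
# like this (left commented out so the sketch changes nothing when run):
#   git checkout "$(release_ref 3.2.2)"
#   grep -n -i "protocol" BUILDING.txt   # check the required Protocol Buffers version
```

Checking BUILDING.txt first matters because the prerequisites differ between releases: as noted at the start of this guide, Hadoop 3.2.2 requires a different Protocol Buffers version than 3.2.1.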

The same approach can also be extended to build other Apache frameworks, since many of them are Java-based.

If you encounter any issues while following this guide, please post a comment here and I will try my best to help.